Last week, Craig Venter created a media frenzy – and a frenzy of bioethical hand-wringing – when he announced the creation of the first “synthetic cell.” In reality, his team of researchers had created the first synthetic genome, the operating system of the cell. They had, in effect, switched the operating system of a particular cell to a new operating system that they had synthesized and edited.

Though many of the headlines talked of Venter being God and having created life in the lab, that is not an accurate way to describe it. Venter started with a particular naturally occurring cell and effectively de-compiled, analysed and then painstakingly edited and reassembled that cell’s genome to create a version of the cell never found in nature. Researchers had already synthesized the genome of the polio virus, creating a genome that would actually “produce” a live virus that infected mice in the lab, but the size of that initiative was several orders of magnitude smaller. The significance of what Venter’s team did lay in the scale of the enterprise and the mastery of the code that it demonstrated. It is as if I took your computer, copied the operating system, figured out what each part of that system did, pruned, cut and edited its functions, and then reloaded a substantially edited system back into the computer – a version which actually proved capable of running it.

Why do this in the first place? Why create a cell with a synthetic genome? Synthetic biology is a term with lots of meanings, but at its most imaginative and inventive, it is striving to move from genetic tinkering to genetic engineering. At the moment we have lots of examples of artisanal editing of genetic code: splicing a gene for luminosity taken from jellyfish into a tomato plant, say, or creating a transgenic goat which secretes spider silk, or insulin, in its milk. To an outsider these examples seem impressive (and sometimes creepy) enough. But according to the synthetic biologists, we are still largely at the stage of medieval artisans hand-crafting objects; the artisan’s workshop produced impressive creations, but there were no standard screws, valves, gauges, no “off-the-rack” components, no assembly line.

Synthetic biology seeks to remedy that deficiency, to provide the standard platforms for all genetic engineering, so that the next researcher who wishes, say, to create a biofuel with low carbon emissions will be able to use a standard synthetic cell line, the genome of which is completely known, edited so that no unwanted functions remain. When you turn on your computer to finish that essay, or complete that spreadsheet, you do not first have to write an operating system – it comes already loaded. The code writers of synthetic biology want to provide you with something very similar and, just as with computer operating systems, there are reasons to believe there will be strong network effects – markets will tip towards standardization and those who control these basic biological tools will thus gain considerable market power.

In an article written for the journal PLoS Biology in 2007, my colleague Arti Rai and I explored the likely legal future of synthetic biology. We found reason to worry that precisely because synthetic biology looks both like software writing and genetic engineering, it might end up combining the expansive patent law aspects of both those technologies, with the troubling prospect of strong monopolies being created over the basic building blocks of science itself. Some of the patents being filed are astoundingly basic, the equivalent of patenting Boolean algebra right at the birth of computer science. With courts now reconsidering both business method and perhaps software patents, and patents over human genes, the future is an uncertain one.

In the world of software, the proprietary model faces competition from open source alternatives, free both in price and in that their code is openly available and can be scrutinized and rewritten. Internet Explorer competes with the open source browser, Firefox. Microsoft dominates the desktop operating system market but there is a Linux alternative. Microsoft web server software competes with (and trails) the open source offerings from Apache and others. The same is true in the world of synthetic biology. The BioBricks Foundation is a nonprofit founded by scientists who are keenly aware of the parallels to the software world. They want to create an open source collection of standard biological parts, to make sure, in other words, that the basic building blocks, the standard tools of this new world of biological science, remain “open” in a scientific commons. But their efforts, too, are rendered uncertain by the threat of overbroad patents on foundational technologies.

Innovation in synthetic biology has the potential to produce extraordinary scientific advances, helping to cure diseases, to engage in benign environmental engineering and biofuel development and much, much more. Patents will have an important role to play in that process – they will encourage investment and commercialization in ways that are socially beneficial. But patents that are handed out at too fundamental a layer could actually hurt science, limit research and slow down technological innovation. This is where the sloppiness of the reporting about the creation of artificial life has hurt public debate. The danger isn’t that Craig Venter has become God, it is that he might become Bill Gates. We do not want a monopolist over the code of life.

(Published in the Financial Times: May 27 2010 22:32 | Last updated: May 27 2010 22:32 The FT is enlightened enough to let me keep copyright in the articles I write. Please consider rewarding them by looking at the other articles in the New Economy Policy Forum.)

James Boyle is William Neal Reynolds Professor of Law at Duke Law School and the author of The Public Domain: Enclosing the Commons of the Mind.

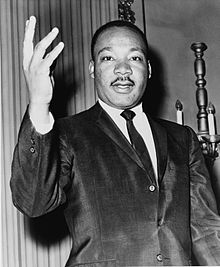

“The danger isn’t that Craig Venter has become God, it is that he might become Bill Gates.”

I find it a little incongruous that you’ve chosen to single Gates out here, who is, let’s face it, a man responsible for co-founding a foundation which currently accounts for 17% of the annual global spending on the attempt to eradicate polio, with similar amounts going towards HIV, TB and leishmaniasis research.

I would contend that, these days, Steve Jobs would be a better model for the role of monopolist in your story.

Overbroad patents on foundational technologies certainly are a danger to technology advancement — in the US. We shouldn’t confuse the world of US patents with the world of science, which is truly world-wide. Although Venter’s group may be the first, they will soon be followed by others with, or without, Venter’s permission. I also think the analogy to computer operating systems is flawed. For an OS, such as Microsoft Windows, the distributed product is a binary executable rather than the source code. The genetic code is the source code. Could Microsoft have maintained its OS monopoly anywhere near as well if it had been necessary to distribute the source code to every customer?

I take your point. Gates’ philanthropic spending is incredibly laudable. And Apple’s closed platforms are indeed worrisome. Still Apple doesn’t yet have a monopoly in any area with network effects as strong as those in the desktop OS world. And — remember — this is an op-ed. Saying “the danger is he is Steve Jobs” would simply not have conveyed what I was trying to say, at least to 90% of the audience.

“Still Apple doesn’t yet have a monopoly in any area with network effects as strong as those in the desktop OS world.”

That’s true – it’s all too easy to believe that Apple is on the verge of girdling the globe with iPhones and iPads, which it isn’t. I agree, the objective truth is that Microsoft is still dominant on the desktop.


Speaking as a geneticist and soon-to-be would-be synthetic biologist, I share your worries. It is fortunate that the “source code” of life will have to be a part of distributed technologies, but if the patent problem keeps things five years behind such broad distribution, simply because of the cost or because it’s kept between corporations only for a while, then the damage is done.

Here’s hoping for an open synth-bio future!
