James Boyle

My new book, The Line: AI and the Future of Personhood, will be published by MIT Press on Oct 22 2024 under a Creative Commons License. MIT is allowing me to post preprint excerpts. The book is a labor of (mainly) love — together with the familiar accompanying authorial side-dishes: excited discovery, frustration, writing block, self-loathing, epiphany, and massive societal change that means you have to rewrite everything. So just the usual stuff. It is not a run-of-the-mill academic discussion, though. For one thing, I hope it is readable. It might even occasionally make you laugh. For another, I will spend as much time on art and constitutional law as I do on ethics, treat movies and books and the heated debates about corporate personality as seriously as I do the abstract philosophy of personhood. These are the cultural materials with which we will build our new conceptions of personhood, elaborate our fears and our empathy, stress our commonalities and our differences. To download the first two chapters, click here.

The Line: AI & The Future of Personhood

Chatbots like ChatGPT have challenged human exceptionalism: we are no longer the only beings capable of generating language and ideas fluently. Chatbots are not conscious. But what happens in the future if the claims to consciousness are more credible? In The Line, James Boyle explores what these changes might do to our concept of personhood, to “the line” we believe separates our species from the rest of the world, but also separates “persons” with legal rights from objects.

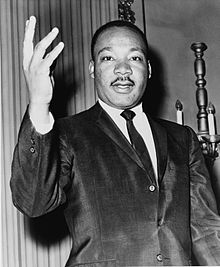

The personhood wars—over the rights of corporations, animals, over the question of when life begins and ends—have always been contentious. We’ve even denied the personhood of members of our own species. How will those old fights affect the new ones, and vice versa? Boyle pursues those questions across a dizzying array of fields. He discusses moral philosophy and science fiction, transgenic species, nonhuman animals, the surprising history of corporate personality, and AI itself. Engaging with empathy and anthropomorphism, courtroom battles on behalf of chimps, and doom-laden projections about the threat of AI, The Line offers fascinating and thoughtful answers to questions about our future that are arriving sooner than we think. To sneak a peek at the first two chapters, click here.

Praise for The Line

“James Boyle has always seen through the technology, to the values we must hold as humans. That gift is just what understanding the emerging technologies of AI will require, and what this book gives. Beautifully clear, intellectually compelling, this book is the best way of thinking through the line that divides us from it, or them. Can we hold the line? Should we? Much depends on the answers.” – Larry Lessig, Harvard Law School

“Deeply thoughtful and witty as hell, Boyle’s exploration of the ‘line’ is an absolute must-read in our budding era of machine intelligence.” – Kate Darling, research scientist, MIT; author of The New Breed

“Are technologically created artifacts things or persons? In this brutally honest and timely book, James Boyle demonstrates how questions that had once been considered science fiction are now a very real and urgent matter.” – David J. Gunkel, Northern Illinois University; author of The Machine Question, Robot Rights, and Person, Thing, Robot

James Boyle is the William Neal Reynolds Professor of Law at Duke Law School, founder of the Center for the Study of the Public Domain, and former Chair of Creative Commons. He is the author of The Public Domain and Shamans, Software, and Spleens, the coauthor of two comic books, and the winner of the Electronic Frontier Foundation’s Pioneer Award for his work on digital civil liberties.

More praise for The Line:

“James Boyle has been thinking about artificial intelligence and personhood for as long as anyone in the academy. The Line is a must-read—the culmination of Boyle’s meticulous research and characteristically insightful analysis on a critical issue of our time.” – Ryan Calo, Lane Powell and D. Wayne Gittinger Professor of Law, University of Washington; cofounder, We, Robot.